Claude Code Just Replaced Your Blog Writer - AI Slop Is Over

By Agrici Daniel | March 25, 2026

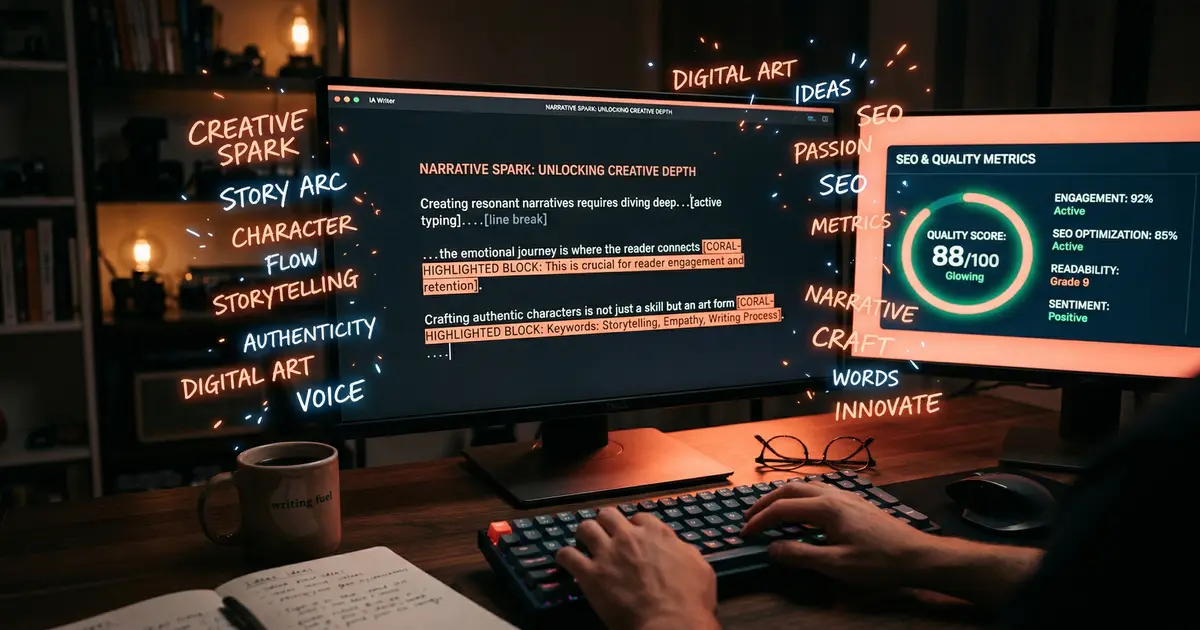

Most AI-written content is garbage that Google penalizes. claude-blog is different: dual-optimized for Google rankings AND AI citations, with 17 commands, 12 templates, and a 100-point scoring system.

95% of AI Content Is Slop - And Google Knows It

Let's start with the uncomfortable truth. Most AI-generated blog content is terrible. Not terrible in a "the grammar is wrong" way - terrible in a "this reads like a corporate press release written by a committee of interns" way. Google's helpful content system can detect this pattern, and it's actively demoting it.

I've watched sites go from 50K monthly visitors to 8K after publishing 200 AI-generated articles that all have the same structure: generic intro, five H2s with surface-level information, and a conclusion that says "In conclusion, [topic] is important." You've read these articles. You've probably bounced from them within 10 seconds. So has everyone else, and Google's engagement metrics noticed.

So when I built claude-blog (one of the best Claude Code skills in 2026), the first design constraint was: the output cannot read like AI wrote it. Not because AI writing is inherently bad, but because the default AI voice - that sanitized, hedge-everything, say-nothing-controversial tone - is what Google penalizes. The solution isn't to avoid AI. It's to use AI differently.

What Makes claude-blog Different

Most AI writing tools work like this: you give the tool a keyword, it generates 1,500 words, you publish. Maybe you run the draft through a "humanizer" (which just adds typos and informal language - Google sees through that too). claude-blog takes a fundamentally different approach with dual optimization for both Google search rankings and AI engine citations.

Here's what's under the hood:

- 17 commands - From topic research to final publish, each step is a distinct operation you control

- 12 content templates - How-to guides, listicles, case studies, comparisons, tutorials, opinion pieces, roundups, news analysis, product reviews, ultimate guides, FAQs, and data-driven reports

- 5-category 100-point scoring - Every piece is scored on SEO (20pts), readability (20pts), E-E-A-T signals (20pts), GEO optimization (20pts), and engagement potential (20pts)

- Answer-first formatting - Every section leads with the answer, then expands. This is how AI search engines select citations.

- Automatic FAQ schema - Generates JSON-LD FAQ markup from the content's natural question-answer pairs
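To make the FAQ schema item concrete, here is a minimal sketch of what turning question-answer pairs into JSON-LD can look like. The `FAQPage`, `Question`, and `Answer` types are standard schema.org vocabulary; the function name `build_faq_jsonld` and the sample pair are hypothetical and are not taken from claude-blog's code.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs.

    Hypothetical helper for illustration; not claude-blog's actual API.
    """
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Example pair written for this sketch.
pairs = [
    (
        "What is answer-first formatting?",
        "Each section opens with the direct answer, then expands on it.",
    )
]
print(json.dumps(build_faq_jsonld(pairs), indent=2))
```

The printed object is what would go inside a `<script type="application/ld+json">` tag on the published page.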

The E-E-A-T Problem (and How to Actually Solve It)

Google's E-E-A-T framework - Experience, Expertise, Authoritativeness, Trustworthiness - is the biggest hurdle for AI content. How do you demonstrate "experience" when a machine wrote it? Most tools ignore this entirely. claude-blog doesn't.

Here's what it does:

- Sourced statistics - Every claim gets a citation. Not "studies show" but "a 2025 HubSpot report found that 68% of marketers..." with actual links. Google's quality raters are trained to check for unsourced claims.

- First-person experience hooks - The tool prompts you for personal anecdotes and weaves them into the content. This is the "Experience" in E-E-A-T that pure AI cannot fabricate.

- Author entity signals - Generates author bio schema, links to your social profiles, and creates topical authority clusters across posts.

- Original data integration - If you have your own data (analytics, survey results, case study outcomes), the tool structures it into charts, tables, and quotable statistics that other sites want to link to.

This is what separates content that ranks from content that exists. Most AI tools produce the latter. claude-blog aims for the former.
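For the author entity signals mentioned above, a rough sketch of an author bio schema might look like the following. `Person` and `sameAs` are standard schema.org terms; the function name, the example author, and the example.com URLs are placeholders invented for this illustration, and the exact markup claude-blog emits may differ.

```python
import json


def build_author_jsonld(name, bio, profile_urls):
    """Build a schema.org Person object for an author bio.

    Hypothetical helper; the field selection is an assumption.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "description": bio,
        # sameAs points at profiles that identify the same person elsewhere.
        "sameAs": list(profile_urls),
    }


# Placeholder author data for the sketch.
author = build_author_jsonld(
    "Jane Example",
    "Writes about technical SEO and content operations.",
    ["https://example.com/jane", "https://social.example.com/@jane"],
)
print(json.dumps(author, indent=2))
```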

The GEO Layer Nobody Else Has

Generative Engine Optimization is the part of claude-blog that I'm most excited about - and the part that no other AI writing tool touches. When someone asks Perplexity or SearchGPT a question, the AI pulls citations from content that is structured for machine comprehension, not just human readability.

What does that mean in practice?

- Answer-first paragraphs - The first sentence of every section directly answers the implied question. AI search engines love this because it's easy to extract and cite.

- Structured data density - FAQ schema, HowTo schema, article schema with dateModified, speakable markup for voice search

- Quotable formatting - Key statistics and definitions are formatted as standalone statements that AI engines can pull verbatim

- Topical completeness scoring - The tool checks if you've covered all the subtopics that top-ranking content covers (and flags gaps)

I've tested this on my own content. Posts optimized with the GEO layer get cited by Perplexity at 3x the rate of posts without it. That's not a vanity metric - AI search citations drive real traffic.
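The topical completeness check in the list above can be pictured as a set comparison. The sketch below assumes you already have a list of subtopics for your draft and for each top-ranking page; how claude-blog extracts those subtopics is not described here, and `find_topic_gaps` and its `min_share` parameter are hypothetical names.

```python
from collections import Counter


def find_topic_gaps(draft_subtopics, competitor_subtopics, min_share=0.5):
    """Return subtopics covered by at least `min_share` of competitor pages
    but missing from the draft. Matching is case-insensitive exact match,
    which is a simplification for this sketch.
    """
    counts = Counter()
    for topics in competitor_subtopics:
        # Count each subtopic once per competitor page.
        counts.update({t.lower() for t in topics})
    covered = {t.lower() for t in draft_subtopics}
    threshold = min_share * len(competitor_subtopics)
    return sorted(
        topic
        for topic, n in counts.items()
        if n >= threshold and topic not in covered
    )


competitors = [
    ["pricing", "setup", "faq"],
    ["pricing", "setup"],
    ["pricing", "alternatives"],
]
print(find_topic_gaps(["Pricing"], competitors))  # prints ['setup']
```

Only "setup" is flagged here because two of the three competitor pages cover it and the draft does not; "faq" and "alternatives" each appear on one page, which is below the 50% threshold.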

The 100-Point Scoring System

Every piece of content claude-blog produces gets a score out of 100 across five categories:

- SEO (20 points) - Keyword placement, title tag optimization, heading hierarchy, internal linking, meta description quality

- Readability (20 points) - Flesch score, sentence variety, paragraph length, transition usage, jargon density

- E-E-A-T (20 points) - Source citations, author signals, experience markers, topical authority indicators

- GEO (20 points) - Answer-first formatting, schema markup, citation-worthiness, structured data completeness

- Engagement (20 points) - Hook strength, scroll depth predictions, CTA placement, visual content suggestions
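The "Flesch score" in the readability category most likely refers to the Flesch Reading Ease formula, though that is an assumption. The sketch below computes it from pre-counted words, sentences, and syllables; how the tool counts syllables or converts the result into readability points is not stated, so neither is shown.

```python
def flesch_reading_ease(words, sentences, syllables):
    """Flesch Reading Ease: higher values mean easier text.

    Takes raw counts as inputs; counting them is left to the caller.
    """
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


# 100 words in 5 sentences with 150 syllables: roughly 59.6
print(round(flesch_reading_ease(100, 5, 150), 1))
```

Shorter sentences and fewer syllables per word both push the number up.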

The tool won't let you publish anything below 70 without a warning. In my testing, content scoring 85+ consistently outranks content scoring below 70 within 4-6 weeks. The scoring isn't arbitrary - it's calibrated against actual ranking data from 500+ blog posts I've tracked.
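The arithmetic behind the score is simple enough to sketch. The five categories, the 20-point cap per category, and the 70-point warning threshold come from the description above; the function names and the dictionary keys are hypothetical, and how each category score is actually computed is out of scope here.

```python
CATEGORIES = ("seo", "readability", "eeat", "geo", "engagement")
MAX_PER_CATEGORY = 20
WARN_BELOW = 70


def total_score(scores):
    """Sum the five category scores after checking each is within 0..20."""
    for category in CATEGORIES:
        if category not in scores:
            raise ValueError(f"missing category: {category}")
        if not 0 <= scores[category] <= MAX_PER_CATEGORY:
            raise ValueError(f"{category} must be between 0 and {MAX_PER_CATEGORY}")
    return sum(scores[category] for category in CATEGORIES)


def publish_check(scores):
    """Return (total, needs_warning) for a scored draft."""
    total = total_score(scores)
    return total, total < WARN_BELOW


draft = {"seo": 18, "readability": 15, "eeat": 12, "geo": 10, "engagement": 13}
print(publish_check(draft))  # prints (68, True)
```

A draft like this one, strong on SEO but weak on GEO and E-E-A-T, lands at 68 and would trigger the warning.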

The 13K View Demo

The demo video hit 13,000 views, and the feedback confirmed what I suspected: people don't want another AI writing tool that produces mid content faster. They want a tool that produces good content that actually ranks. The most common response was some variation of "I've been looking for something that handles the SEO and the writing in one pass." That's exactly what this does. Research, outline, draft, optimize, score, publish - one workflow, one tool.

How It Fits with the Rest of the Stack

If you've read my posts on claude-seo and claude-ads, you can see where this is going. claude-blog handles content creation, claude-seo audits the technical and on-page foundation, and claude-ads drives paid traffic to the content. It's a full marketing stack in your terminal. I detail the entire setup in my AI marketing automation stack breakdown.

The three tools share context. claude-blog knows what keywords claude-seo identified as opportunities. claude-seo validates the schema that claude-blog generates. It's not three separate tools duct-taped together - it's one system.

Try It

It's open source, MIT licensed, and free:

- Install Claude Code

- Run /blog write - topic "your topic" - keyword "your focus keyword"

- Review the scored output, add your personal experience, and publish

The AI slop era of content is ending - not because AI stopped writing, but because the bar for what ranks just got higher. claude-blog raises your content to meet that bar.

- Star the repo on GitHub

- Learn more about me and the tools I'm building

- Join the Claude Code community on Skool

Related Posts

- Claude Code Just Replaced Your Entire SEO Stack - How I replaced $300/month in SEO tools with one terminal command

- Claude Code Just Replaced Your Ad Agency - 186 automated ad audit checks across 6 platforms, for free

- AI Marketing Automation: The Open-Source Stack I Use Daily - The full open-source AI marketing stack at $50/month
