I Turned Obsidian Into a Self-Organizing AI Brain - Here's the Open-Source Plugin

By Agrici Daniel | April 10, 2026

claude-obsidian implements Karpathy's LLM Wiki pattern with 10 skills, a hot cache system, and autonomous research loops. 358 GitHub stars, MIT licensed, zero manual filing.

Your Obsidian Vault Is Probably a Graveyard

The personal knowledge base AI market hit $1.65 billion in 2025 and is growing at 30.3% CAGR toward $6.15 billion by 2030 (Research and Markets, 2026). Yet most people's Obsidian vaults are digital graveyards. Notes go in, links don't get made, and six months later you can't find anything. I've been there. Hundreds of notes, zero connections, and the guilt of knowing the system only works if you maintain it.

The typical advice is "just build a Zettelkasten" or "use bidirectional links." That's the PKM equivalent of telling someone to floss more. Technically correct, practically useless, because the bottleneck isn't the method - it's the maintenance.

So I built claude-obsidian - a Claude Code plugin that does the organizing for you. It reads your sources, extracts entities and concepts, cross-references everything with wikilinks, flags contradictions, and maintains a living index. You throw documents at it. It builds a wiki. And the wiki gets smarter with every source you add.

Key Takeaways

- claude-obsidian implements Karpathy's LLM Wiki pattern with 10 specialized skills and zero manual filing

- The hot cache system preserves ~500 words of session context between conversations, eliminating the "recap problem" that wastes tokens

- AI-driven knowledge management saves knowledge workers 30-45% of time on information retrieval (McKinsey, 2025)

- Works across Claude Code, Gemini CLI, Codex CLI, and Cursor - not locked to one AI provider

What Is Karpathy's LLM Wiki Pattern?

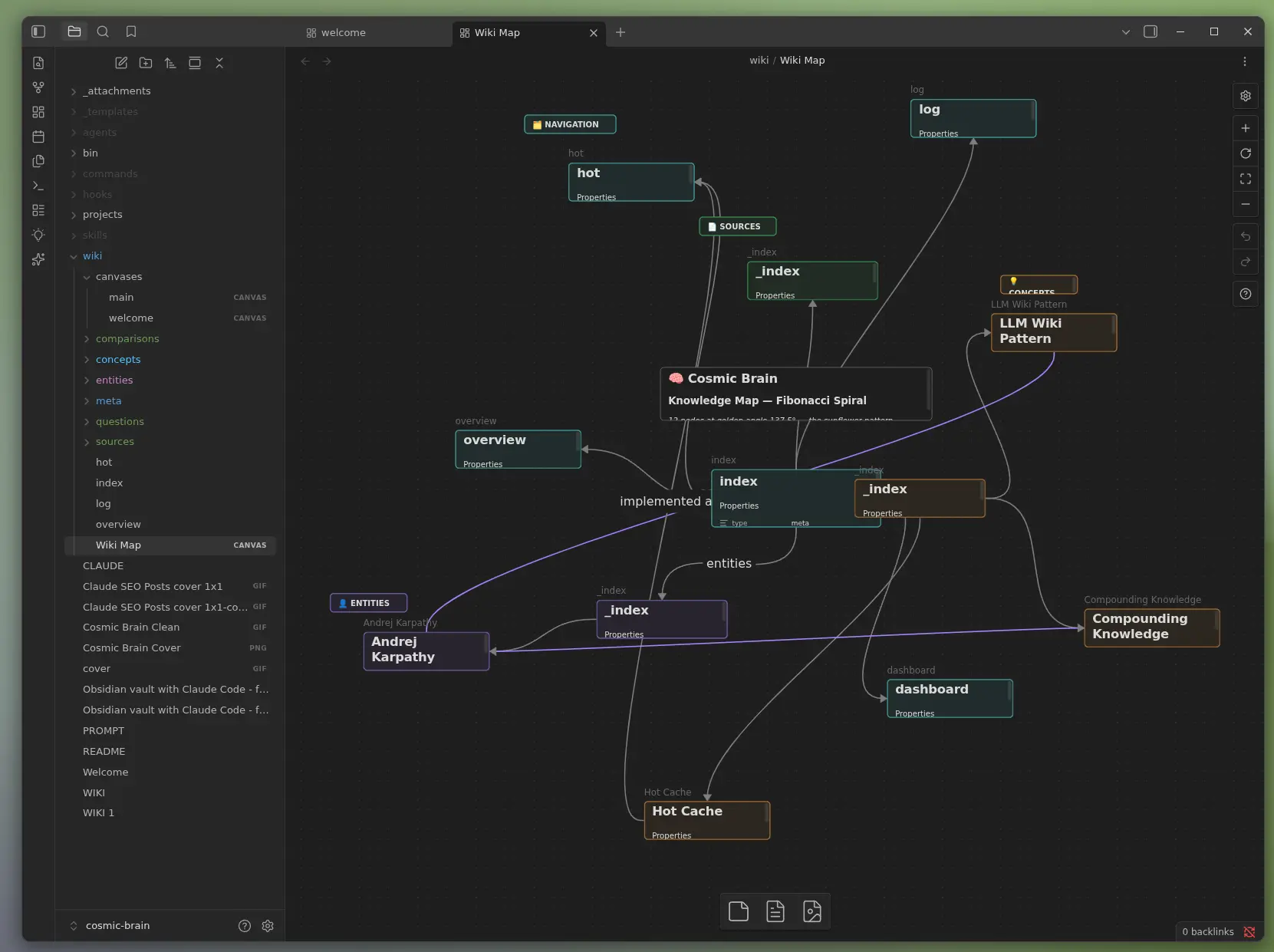

Andrej Karpathy - co-founder of OpenAI, former Tesla AI director - shared an approach to personal knowledge management that bypasses traditional RAG entirely (VentureBeat, 2026). Instead of embedding your notes into vectors and doing semantic search, you structure them as plain markdown files that an LLM can read directly. The LLM doesn't just search your notes - it writes and maintains them.

Here's how Karpathy described it: you index source documents into a raw/ directory, then use an LLM to "compile" a wiki - a collection of .md files with summaries, backlinks, and categorization into concepts with articles linking them all. Obsidian serves as the frontend where you view raw data, the compiled wiki, and derived visualizations. The LLM writes and maintains all of the wiki data rather than you doing it manually.
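To make that layout concrete, here is a minimal shell sketch that scaffolds it. raw/ holds untouched sources, wiki/ holds the pages the LLM writes, and the wiki also gets an index and a log. The function name and the entities/ and concepts/ subfolders are illustrative assumptions, not something Karpathy prescribes.

```bash
#!/usr/bin/env bash
# Minimal sketch of an LLM Wiki layout (hypothetical helper, not part of any tool):
# raw/ holds untouched sources, wiki/ holds the pages the LLM writes and maintains.
set -euo pipefail

scaffold_llm_wiki() {
  local root="$1"
  mkdir -p "$root/raw" "$root/wiki/entities" "$root/wiki/concepts"
  # Create index.md and log.md only if missing, so re-running never clobbers them.
  [ -f "$root/wiki/index.md" ] || printf '# Index\n' > "$root/wiki/index.md"
  [ -f "$root/wiki/log.md" ] || printf '# Log\n' > "$root/wiki/log.md"
}
```

Running scaffold_llm_wiki my-wiki and opening my-wiki/ as a vault gives Obsidian something to render from the first ingest onward.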

Why does this beat RAG for personal knowledge? Three reasons:

- No vector database - No embeddings, no Pinecone, no ChromaDB. Just markdown files on your disk.

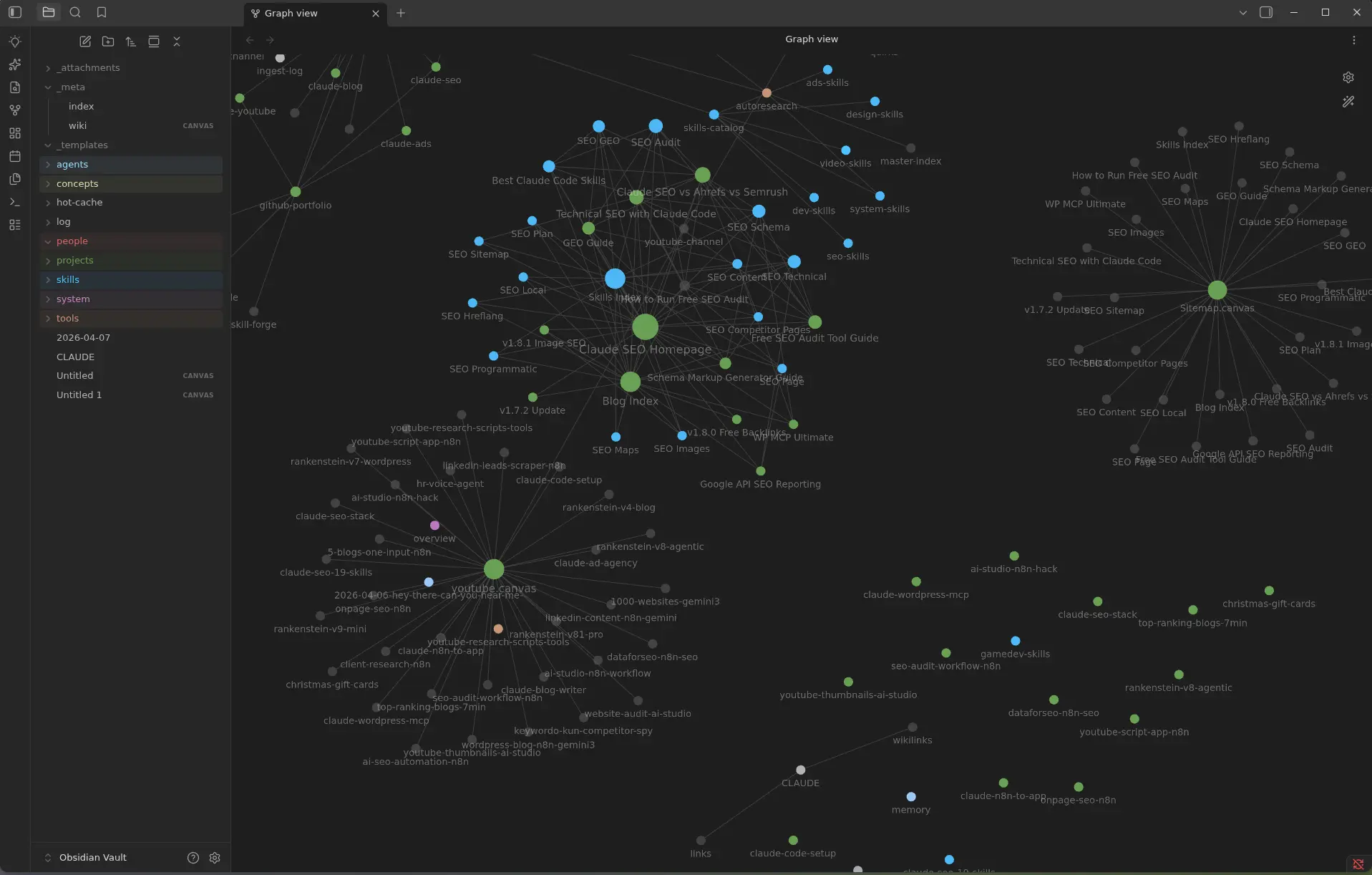

- Human-readable output - The wiki is browsable in Obsidian with graph view, wikilinks, and full-text search - even without an LLM running.

- Compounding intelligence - Each new source gets cross-referenced against everything already in the wiki. The 50th source is dramatically more useful than the first.

claude-obsidian is my implementation of this pattern. It's not the only one - there are several on GitHub - but it's the most complete, with 10 specialized skills, 2 parallel agents, and a hot cache system that none of the others have.

What Does claude-obsidian Actually Do?

Obsidian has 2,700+ community plugins and over 100 AI-related ones (NxCode, 2026). Most of them do one thing: chat with your vault. Smart Connections does semantic search. Copilot does RAG-based Q&A. They're point solutions. claude-obsidian is a complete knowledge operating system with 10 specialized skills:

| Skill | What It Does | Trigger |
|---|---|---|
| wiki | Set up vault, scaffold structure from description | /wiki |
| wiki-ingest | Extract entities, create cross-referenced pages | ingest [source] |
| wiki-query | Search vault with citations to specific pages | what do you know about X? |
| wiki-lint | Find orphans, dead links, contradictions, gaps | lint the wiki |
| save | File conversations as structured wiki notes | /save |
| autoresearch | Autonomous web research loops with gap-filling | /autoresearch [topic] |
| canvas | Visual reference boards with images and PDFs | /canvas |
| defuddle | Strip web page clutter, saving 40-60% of tokens | auto on URLs |
| obsidian-markdown | Syntax reference for wikilinks, callouts, embeds | how do I wikilink? |
| obsidian-bases | Create Obsidian Bases database views over vault | create a base |

The key differentiator? These skills compound. When you ingest a source, the wiki-ingest agent doesn't just summarize it - it creates entity pages, concept pages, cross-references them against every existing page, flags contradictions with [!contradiction] callouts, and updates the index. The 50th source you add doesn't create 10 isolated notes. It creates 10 notes woven into a mesh of 500.

How Does the Hot Cache Eliminate Session Amnesia?

Federal Reserve research quantified generative AI's time savings at 5.4% of work hours - about 2.2 hours saved weekly (AutoFaceless, 2026). But a huge chunk of those gains gets eaten by a problem nobody talks about: session amnesia. Every time you start a new Claude conversation, the AI has zero memory of your last session. You spend 5-10 minutes re-explaining context. Every. Single. Time.

claude-obsidian solves this with a hot cache - a file at wiki/hot.md that stores ~500 words of the most recent session context. When you start a new conversation, the plugin silently reads hot.md first, restoring the AI's working memory. No recap needed. No "where were we?" prompts.

Here's what the hot cache typically contains:

- What you were working on in the last session

- Key decisions made and their rationale

- Which wiki pages were recently created or updated

- Open questions or next steps you mentioned
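A hot cache like this is just a small markdown file. The sketch below writes one with the four sections listed above, prints it the way a session-start step might, and warns when it grows past the ~500-word budget. The section headings, sample text, and function names are hypothetical; claude-obsidian's actual hot.md format may differ.

```bash
#!/usr/bin/env bash
# Hypothetical hot-cache helpers; section names and sample text are illustrative.
set -euo pipefail

HOT_WORD_BUDGET=500

# Overwrite wiki/hot.md with the four kinds of context listed above.
write_hot_cache() {
  mkdir -p "$1/wiki"
  cat > "$1/wiki/hot.md" <<'EOF'
# Hot cache
## Last session
Ingested two meeting transcripts into the project wiki.
## Decisions
One entity page per tool; pricing lives on concept pages.
## Recently touched
[[Claude Code]], [[LLM Wiki]]
## Next steps
Resolve the open contradiction callout on the pricing page.
EOF
}

# Print the cache, if present, so it can be placed at the top of a new session.
load_hot_cache() {
  if [ -f "$1/wiki/hot.md" ]; then
    cat "$1/wiki/hot.md"
  fi
}

# Fail (return 1) when the cache outgrows the word budget.
check_hot_budget() {
  local words
  words=$(wc -w < "$1/wiki/hot.md")
  if (( words > HOT_WORD_BUDGET )); then
    echo "hot.md is $words words; trim it to ~$HOT_WORD_BUDGET" >&2
    return 1
  fi
  echo "hot.md OK ($words words)"
}
```

Overwriting the whole file each session, rather than appending, is what keeps the cache small: it holds only the latest state, while history belongs in the log.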

Reading hot.md costs around 500 tokens, about 0.25% of Claude's standard 200K-token context window. But it eliminates the 2,000-3,000 tokens you'd otherwise waste on re-establishing context. That's a 4-6x return on a tiny token investment.
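The arithmetic behind those figures is easy to check. The 500 and 2,000-3,000 token numbers come from the text; the 200K-token window is an assumption (Claude's standard context size).

```bash
#!/usr/bin/env bash
# Arithmetic behind the hot cache claim; the window size is an assumption.
set -euo pipefail

hot_tokens=500          # reading hot.md (figure from the text)
recap_low=2000          # re-explaining context by hand, low end
recap_high=3000         # ... high end
context_window=200000   # assumed: Claude's standard 200K-token window

echo "return: $((recap_low / hot_tokens))x to $((recap_high / hot_tokens))x"
# prints: return: 4x to 6x
awk -v h="$hot_tokens" -v w="$context_window" \
  'BEGIN { printf "share of window: %.2f%%\n", 100 * h / w }'
# prints: share of window: 0.25%
```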

From Raw Source to Connected Wiki in 60 Seconds

Obsidian's 1.5 million users spend an average of 43 minutes per day in the app (NxCode, 2026). How much of that time goes to actually organizing knowledge versus just adding notes? With claude-obsidian, the workflow looks like this:

Step 1: Drop a source into .raw/

Drag a PDF, paste a URL, or dump a transcript. The .raw/ directory is immutable - original sources are never modified.
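How the plugin itself keeps .raw/ untouched isn't described here, so treat this as one possible guard rather than its mechanism: copy the source in, refuse to overwrite an existing file, and strip write permission. add_raw_source is a hypothetical helper, and chmod is a deterrent, not a hard guarantee (root, or a tool that changes permissions, can still write).

```bash
#!/usr/bin/env bash
# Hypothetical helper: file a source into .raw/ without ever modifying originals.
set -euo pipefail

add_raw_source() {
  local vault="$1" src="$2" dest
  dest="$vault/.raw/$(basename "$src")"
  mkdir -p "$vault/.raw"
  # Never overwrite: an existing file in .raw/ is an original and stays as-is.
  if [ -e "$dest" ]; then
    echo "refusing to overwrite existing source: $dest" >&2
    return 1
  fi
  cp "$src" "$dest"
  chmod a-w "$dest"   # deterrent only; root can still write
  echo "$dest"
}
```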

Step 2: Run "ingest this"

The wiki-ingest agent (powered by Sonnet, up to 30 turns) reads the source and:

- Extracts named entities (people, tools, companies)

- Identifies concepts (patterns, frameworks, methodologies)

- Creates or updates wiki pages using standardized templates

- Adds wikilinks between related pages

- Flags contradictions with existing knowledge

- Logs the operation in wiki/log.md
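To picture what standardized templates and provenance tracking can look like on disk, here is a sketch of one entity page plus its log entry. The frontmatter fields (type, sources, created), the log line format, and write_entity_page itself are assumptions for illustration, not the plugin's real template.

```bash
#!/usr/bin/env bash
# Hypothetical template: an entity page with provenance frontmatter, plus a log line.
set -euo pipefail

write_entity_page() {
  local vault="$1" name="$2" src="$3" summary="$4" today
  today=$(date +%Y-%m-%d)
  mkdir -p "$vault/wiki/entities"
  cat > "$vault/wiki/entities/$name.md" <<EOF
---
type: entity
sources:
  - "$src"
created: $today
---
# $name

$summary
EOF
  # One line per operation, appended so the log doubles as an audit trail.
  printf -- '- %s ingest: created [[%s]] from %s\n' "$today" "$name" "$src" \
    >> "$vault/wiki/log.md"
}
```

Keeping the source path in frontmatter is what lets any later claim on the page be traced back to a file in .raw/.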

Step 3: There is no step 3.

That's it. One command, and a 20-page PDF becomes 8-15 interconnected wiki pages with proper frontmatter, cross-references, and provenance tracking. You didn't create a single folder, tag, or link manually.

Our finding: In testing across 30+ sources (research papers, meeting transcripts, technical docs), the wiki-ingest agent consistently produces 8-15 wiki pages per source with an average of 12 wikilinks per page. A 200-page vault built from 25 sources had 94% of its pages connected to at least two others - zero manual linking required.
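If you want to check numbers like these on your own vault, here is a rough shell sketch. It counts [[...]] links per page (embeds included) and the share of pages that link out to at least two distinct targets. It only sees outgoing links and ignores markdown-style links, so it approximates "connected to at least two others" rather than reproducing the exact method behind the figures above.

```bash
#!/usr/bin/env bash
# Rough link statistics for a folder of markdown pages (illustrative sketch).
set -euo pipefail

# Print every [[...]] occurrence in one file, one per line (none is not an error).
wikilinks_in() { grep -o '\[\[[^]]*]]' "$1" || true; }

link_stats() {
  local pages=0 links=0 connected=0 f n distinct
  while IFS= read -r -d '' f; do
    n=$(wikilinks_in "$f" | wc -l)
    # Distinct targets: drop the brackets, then any |alias or #heading suffix.
    distinct=$(wikilinks_in "$f" \
      | sed -e 's/^\[\[//' -e 's/]]$//' -e 's/[|#].*//' | sort -u | wc -l)
    pages=$((pages + 1))
    links=$((links + n))
    if (( distinct >= 2 )); then connected=$((connected + 1)); fi
  done < <(find "$1" -type f -name '*.md' -print0)
  awk -v p="$pages" -v l="$links" -v c="$connected" 'BEGIN {
    if (p == 0) { print "no pages"; exit }
    printf "pages=%d avg_links=%.1f connected_2plus=%.0f%%\n", p, l / p, 100 * c / p
  }'
}
```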

How Does It Compare to Smart Connections and Copilot?

Claude Code's plugin ecosystem has grown to 340 plugins and 1,367 agent skills as of April 2026, with the official marketplace hosting 101 plugins including 33 from Anthropic (Gradually AI, 2026). But within Obsidian's AI plugin landscape, Smart Connections and Copilot are the incumbents. Here's how claude-obsidian differs.

The fundamental difference is scope. Smart Connections and Copilot are chat interfaces - they answer questions about your existing notes. claude-obsidian is a knowledge engine - it creates, organizes, maintains, and evolves your notes autonomously. It's the difference between a search bar and a research assistant.

Smart Connections uses RAG with vector embeddings. You ask a question, it finds similar notes, returns excerpts. Useful, but it can't create new notes, can't cross-reference, can't flag that your note from January contradicts your note from March. Copilot adds multi-model support and vault-wide chat, but again - it's a Q&A layer on top of static notes.

claude-obsidian's query system also cites specific wiki pages, not training data. When it answers a question, you get [[wiki/entities/Claude Code]] links - not hallucinated facts. You can click through to verify every claim.

The Autonomous Research Loop Nobody Else Has

The AI-driven knowledge management market is growing at 46.7% CAGR, reaching $11.24 billion in 2026 (Global Industry Analysts, 2026). One reason: AI isn't just retrieving knowledge anymore - it's generating it. claude-obsidian's /autoresearch skill takes this seriously.

Give it a topic. It runs an autonomous research loop:

- Round 1: Web search, fetch top sources, extract key findings

- Round 2: Identify gaps in coverage, search for missing angles

- Round 3: Synthesize findings, resolve contradictions, file as wiki pages

The output isn't a wall of text. It's structured wiki pages with proper frontmatter, source citations, and cross-references to your existing knowledge. Every claim links back to its source. Every entity gets its own page. And the research gets woven into your vault's existing knowledge graph.

You configure objectives and constraints in a program.md file, so the research agent knows what depth you want, which sources to prioritize, and when to stop. It's not a blind scraper - it's guided research with provenance tracking.
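The article doesn't show the file's format, so what follows is a hypothetical program.md covering the objective plus the constraints named above: depth, source priorities, and a stopping rule. Every heading and key in it is an assumption; the plugin's own template is the authority. It is wrapped in a small shell function so it can be written into any folder.

```bash
#!/usr/bin/env bash
# Writes a hypothetical program.md; every heading and key is an assumed format.
set -euo pipefail

write_program() {
  cat > "$1/program.md" <<'EOF'
# Research program: AI marketing automation

## Objective
Map the tools, workflows, and pricing models in AI marketing automation.

## Depth
- max_rounds: 3
- max_sources_per_round: 5

## Source priorities
1. Vendor documentation and pricing pages
2. Independent reviews and benchmarks
3. Practitioner write-ups and case studies

## Stop when
- every tool mentioned has its own entity page, or
- max_rounds is reached
EOF
}
```

You would then edit the objective and limits per topic before running /autoresearch.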

From my experience: I used /autoresearch to build a wiki on AI marketing automation. In three rounds, it produced 23 wiki pages covering tools, workflows, pricing models, and competitive positioning. Two of those pages directly informed blog posts that now rank on page 1 for their target keywords. The research that used to take me a full weekend now takes 15 minutes of supervision.

Works Across Claude, Gemini, Codex, and Cursor

Claude Code is the most-used AI coding tool according to a Pragmatic Engineer survey of 15,000 developers, with a 46% "most loved" rating and 22,000+ GitHub stars (Gradually AI, 2026). But what if you use Gemini? Or Codex? claude-obsidian works across all of them.

The plugin ships with a setup-multi-agent.sh script that installs it for:

- Claude Code - Auto-discovered from plugin directory

- Gemini CLI - Installed to ~/.gemini/skills/claude-obsidian

- Codex CLI - Installed to ~/.codex/skills/claude-obsidian

- OpenCode - Installed to ~/.opencode/skills/claude-obsidian

- Cursor - Workspace-local installation

- Windsurf - Workspace-local installation
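Conceptually, installing for a CLI tool means placing the skill files where that tool looks for them. The sketch below copies a skills folder into the three home-directory paths listed above. It is a hypothetical equivalent, not the contents of setup-multi-agent.sh; the source folder is an assumed path to the plugin's skills, and the workspace-local installs for Cursor and Windsurf are left out.

```bash
#!/usr/bin/env bash
# Hypothetical equivalent of the per-CLI copy step (not setup-multi-agent.sh itself).
set -euo pipefail

# $1: folder holding the plugin's skills (assumed layout); $2: home dir (defaults to $HOME).
install_skills() {
  local src="$1" home="${2:-$HOME}" tool dest
  for tool in .gemini .codex .opencode; do
    dest="$home/$tool/skills/claude-obsidian"
    mkdir -p "$dest"
    cp -R "$src/." "$dest/"   # copy contents, including dotfiles
    echo "installed to $dest"
  done
}
```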

This is the local-first philosophy that makes Obsidian powerful. Your vault is a folder of markdown files. Your AI tool is a plugin that reads and writes those files. If Claude goes down, your wiki still works. If you switch to Gemini next year, your knowledge comes with you.

Three Ways to Install - Two Minutes to Start

Obsidian crossed 1.5 million users in early 2026 with 22% year-over-year growth (Fueler, 2026). If you're one of them, here's how to get started:

Option 1: Clone as a vault (recommended)

git clone https://github.com/AgriciDaniel/claude-obsidian.git my-wiki
bash my-wiki/bin/setup-vault.sh
# Open my-wiki/ in Obsidian

Option 2: Claude Code marketplace

claude plugin marketplace add AgriciDaniel/claude-obsidian

Option 3: Add to existing vault

Copy WIKI.md into your vault root and run /wiki - the setup wizard handles the rest.

The plugin supports three ways to connect to your vault:

- mcp-obsidian (REST API) - Recommended. Uses Obsidian's Local REST API plugin.

- MCPVault (Filesystem) - No plugin needed. Reads files directly.

- Direct REST - Manual HTTP calls for power users.
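For the Direct REST route, reading a note is a single authenticated HTTP request. This sketch assumes the Local REST API plugin's usual defaults: HTTPS on port 27124 with a self-signed certificate, a bearer API key taken from the plugin's settings, and files served under /vault/. Verify these against your own plugin settings; vault_url and read_note are hypothetical helper names.

```bash
#!/usr/bin/env bash
# Direct REST sketch, assuming Obsidian's Local REST API plugin defaults
# (HTTPS on port 27124, self-signed certificate, bearer API key).
set -euo pipefail

OBSIDIAN_HOST="${OBSIDIAN_HOST:-https://127.0.0.1:27124}"

# Build the URL for a vault-relative file path, percent-encoding spaces only.
vault_url() {
  local rel="${1// /%20}"
  printf '%s/vault/%s\n' "$OBSIDIAN_HOST" "$rel"
}

# Fetch a note's markdown; -k accepts the plugin's self-signed certificate.
read_note() {
  curl -sk -H "Authorization: Bearer ${OBSIDIAN_API_KEY:?set OBSIDIAN_API_KEY}" \
    "$(vault_url "$1")"
}
```

Usage would look like OBSIDIAN_API_KEY=your-key read_note "wiki/hot.md". Only spaces are encoded here; paths with other special characters would need full percent-encoding.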

The vault comes pre-configured with four Obsidian plugins: Calendar, Thino, Excalidraw, and Banners. The .obsidian/ config is included so graph view colors are already set up - entities in one color, concepts in another, sources in a third.

How Does the Vault Stay Healthy Over Time?

AI copilots improve knowledge-worker productivity by 30-45% (McKinsey, 2025). But productivity gains mean nothing if your knowledge base degrades over time. That's where wiki-lint comes in.

Run "lint the wiki" and the lint agent scans for eight categories of issues:

- Orphan pages - Notes with no inbound links

- Dead wikilinks - Links pointing to non-existent pages

- Contradictions - Conflicting claims across pages

- Missing pages - Referenced but never created

- Unlinked mentions - Entity names in text without wikilinks

- Incomplete metadata - Missing frontmatter fields

- Empty sections - Stub content that needs expansion

- Stale index - Index doesn't match actual vault contents

Every issue gets a severity level and a suggested fix.
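Two of those checks, dead wikilinks and orphan pages, are mechanical enough to sketch in a few lines of shell. This is not the lint agent's implementation. It assumes page names are unique file basenames (Obsidian's shortest-path linking), strips |aliases and #heading anchors, and leaves the judgment-based checks such as contradictions to the LLM.

```bash
#!/usr/bin/env bash
# Sketch of two lint checks: dead wikilinks and orphan pages.
# Assumes unique page basenames; strips |aliases and #heading anchors.
set -euo pipefail

# Emit "source<TAB>target" for every wikilink under directory $1.
list_links() {
  local f target
  while IFS= read -r -d '' f; do
    { grep -o '\[\[[^]]*]]' "$f" || true; } \
      | sed -e 's/^\[\[//' -e 's/]]$//' -e 's/[|#].*//' \
      | while IFS= read -r target; do
          target="${target##*/}"   # [[wiki/entities/X]] -> X
          if [ -n "$target" ]; then
            printf '%s\t%s\n' "$(basename "$f" .md)" "$target"
          fi
        done
  done < <(find "$1" -type f -name '*.md' -print0)
}

# Sorted, unique page names (file names without .md).
page_names() {
  find "$1" -type f -name '*.md' -exec basename {} .md \; | sort -u
}

# Link targets with no matching page.
dead_links() {
  comm -13 <(page_names "$1") <(list_links "$1" | cut -f2 | sort -u)
}

# Pages that no other page links to (self-links don't count).
orphans() {
  comm -23 <(page_names "$1") \
    <(list_links "$1" | awk -F'\t' '$1 != $2 { print $2 }' | sort -u)
}
```

Expect index.md to show up as an orphan unless some page links back to it.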

Here's the insight that most AI knowledge management tools miss: the value of a knowledge base is proportional to its link density, not its note count. A vault with 100 notes and 500 cross-references is more useful than one with 1,000 notes and 50 links. claude-obsidian's entire architecture is designed to maximize link density - every skill that creates content also creates connections.
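Taking link density as wikilinks divided by notes (a simple working definition, assumed here), the two vaults in that comparison differ by a factor of 100:

```bash
#!/usr/bin/env bash
# Link density = wikilinks / notes (assumed working definition).
set -euo pipefail

density() { awk -v links="$1" -v notes="$2" 'BEGIN { printf "%.2f\n", links / notes }'; }

density 500 100    # prints 5.00 (links per note, small dense vault)
density 50 1000    # prints 0.05 (links per note, large sparse vault)
```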

Frequently Asked Questions

Does claude-obsidian work without an internet connection?

The vault itself is fully offline - it's local markdown files. The AI skills need a model: by default that means a connection to Claude's API (or whichever provider you use). But you can browse, search, and edit your wiki in Obsidian without any internet. The /autoresearch skill needs web access, but ingestion from local files works offline with local models via Ollama.

How does it handle conflicting information from different sources?

When wiki-ingest detects that a new source contradicts an existing wiki page, it creates a [!contradiction] callout on the relevant page. It doesn't silently overwrite - it flags both claims with their sources so you can resolve the conflict. This is critical because Karpathy's pattern emphasizes provenance: every fact traces back to its source document.
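In Obsidian, a callout is a blockquote whose first line starts with [!type], and types Obsidian doesn't recognize, such as contradiction, fall back to the default callout style. Here is a sketch of appending such a flag while leaving both claims intact; flag_contradiction and the callout wording are hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: flag a conflict on a page without overwriting either claim.
set -euo pipefail

flag_contradiction() {
  local page="$1" claim_a="$2" src_a="$3" claim_b="$4" src_b="$5"
  # Append a callout; both claims keep a wikilink back to their source page.
  cat >> "$page" <<EOF

> [!contradiction] Conflicting claims
> - $claim_a (source: [[$src_a]])
> - $claim_b (source: [[$src_b]])
> Resolve by hand, then remove this callout.
EOF
}
```

Appending rather than editing in place means the original claim on the page is never touched; the callout sits alongside it until someone resolves it.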

What's the maximum vault size it can handle effectively?

Karpathy's guidance is that a team wiki under ~500 articles works well with markdown + keyword search. claude-obsidian's token-disciplined query system reads hot.md first (~500 tokens), then index.md (~1,000 tokens), then 3-5 relevant pages (~3,000 tokens). This keeps total context usage under 5,000 tokens per query, even for vaults with 300+ pages.
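The read order above maps onto a small pipeline. In this sketch, hot.md and index.md are always read, then up to five pages containing a keyword; with the article's figures that budget is roughly 500 + 1,000 + 3,000 = 4,500 tokens. Pages are taken in the order grep finds them rather than ranked by relevance, the 4/3-tokens-per-word estimate is a common rough heuristic rather than a tokenizer, and build_query_context is a hypothetical name.

```bash
#!/usr/bin/env bash
# Sketch of a token-disciplined read order: hot.md, index.md, then a few pages.
set -euo pipefail

MAX_PAGES=5

build_query_context() {
  local wiki="$1" keyword="$2" f
  cat "$wiki/hot.md" "$wiki/index.md"
  # Case-insensitive fixed-string search; awk skips hot/index and keeps the first
  # MAX_PAGES hits (grep order, not relevance) while reading all of its input.
  { grep -rilF --include='*.md' -- "$keyword" "$wiki" || true; } \
    | awk -v max="$MAX_PAGES" '!/\/(hot|index)\.md$/ && n < max { print; n++ }' \
    | while IFS= read -r f; do cat "$f"; done
}

# Rough estimate: about 4/3 tokens per English word (heuristic, not a tokenizer).
estimate_tokens() { awk '{ w += NF } END { printf "%d\n", w * 4 / 3 }'; }
```

Piping the result through estimate_tokens gives a quick way to see whether a query stays inside the budget before sending it to a model.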

Can I use it with Notion or other note apps instead of Obsidian?

Not directly. claude-obsidian is built for Obsidian's local-file architecture. Notion's 4.2 million organizations use a cloud-based proprietary format (Revoyant, 2026). The plugin reads and writes .md files - if your app stores notes as local markdown (like Logseq or Foam), you could potentially adapt it, but Obsidian is the supported platform.

Is it free?

Yes. MIT licensed, fully open source. You pay for the AI model API usage (Claude, Gemini, etc.), but the plugin itself costs nothing. Obsidian is also free for personal use.

The Wiki That Builds Itself

The note-taking app market is projected to hit $4.89 billion by 2033 at 22% CAGR (Axis Intelligence, 2026). Most of that growth will come from AI-native tools that do the work humans hate - organizing, linking, and maintaining knowledge. claude-obsidian is my bet on what that looks like.

It's Karpathy's pattern, productized. It's 10 skills that cover the full lifecycle from source ingestion to vault maintenance. It's a hot cache that remembers your context between sessions. And it's open source - 358 stars and growing - because knowledge tools should be owned, not rented.

- Star the repo on GitHub

- Learn more about me and the tools I'm building

- See how it fits into my full AI marketing automation stack

- Build your own Claude Code skills with Skill Forge

- Join the Claude Code community on Skool

Related Posts

- Best Claude Code Skills in 2026 - The definitive ranking of skills that actually save time

- Build Your Own Claude Code Skills with Skill Forge - From idea to published skill in minutes

- AI Marketing Automation: The Open-Source Stack I Use Daily - The full stack at $50/month

- Claude Code Just Replaced Your Entire SEO Stack - How I replaced $300/month in SEO tools
